Debunking the Myth That HTTPS Is Slow

Boosting Node.js server throughput with the right TLS cipher suites

by Joe Honton

Ken has been on a quest to squeeze as much juice out of his web server as possible. In this episode he compares server response times using different TLS cipher suites.

Recently, Ken finished converting all of his client's websites from HTTP to HTTPS. With his LetsEncrypt Adventures over, he had time on his hands to think about web server performance. He decided to set up a test harness to benchmark his new server configurations. He was curious to see how much of a penalty he would incur by using various cipher suites.

Since his knowledge of cryptography was still rudimentary, he thought it best to review things before he got started. He summarized his understanding in Cipher Suites Demystified.

Test Harness

Ken set up his test harness using two DigitalOcean droplets located in the same data center. One was the simulated test client, running the h2load stress testing utility. The other was the simulated test server, running the Read Write Tools HTTP/2 Server.

The simulated test client was set up like this:

- Intel 1.8GHz CPU

- 1 GB RAM

- 2 Gbps network interface

- 64-bit Debian 9.5

- h2load software, nghttp2/1.39.0-DEV

The simulated test server was set up like this:

- 6 Intel 2.3GHz CPUs

- 16 GB RAM

- 2 Gbps network interface

- 64-bit Fedora 29

- Read Write Tools HTTP/2 Server 1.0.27

- Node.js 10.13.0

- openssl 1.1.0j

Because the client and server were co-located, network latency was negligible.

The h2load stress testing tool simulated 128 clients simultaneously and repeatedly requesting a 4K static file from the server. Each test began with a 10-second warm-up period, followed by a 10-second measurement period.
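The article does not show the exact command line Ken used, so the following is only a sketch of how a comparable timed run could be assembled. It assumes h2load's `--clients`, `--warm-up-time`, and `--duration` options, and the URL is a hypothetical placeholder.

```python
import shlex


def build_h2load_command(url, clients=128, warm_up_s=10, duration_s=10):
    """Return the argv list for one timing-based h2load run."""
    return [
        "h2load",
        f"--clients={clients}",         # simultaneous simulated clients
        f"--warm-up-time={warm_up_s}",  # seconds excluded from measurement
        f"--duration={duration_s}",     # seconds actually measured
        url,
    ]


# Hypothetical URL: the real test file location is not given in the article.
command = build_h2load_command("https://example.com/static-4k.html")
print(" ".join(shlex.quote(arg) for arg in command))
```

Building the arguments as a list keeps each setting visible and makes it easy to rerun the same test after changing only the server's cipher configuration.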

Results were measured as throughput: the number of requests fulfilled per second. The total number of requests, total memory consumption, and network bandwidth were captured. The average throughput, stated in requests per second, was calculated by dividing the total number of requests completed by the duration (10 seconds).
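As a quick illustration of that calculation, here is a minimal sketch. The request total is a hypothetical figure chosen for the example, not a number reported by Ken's tests.

```python
def average_throughput(total_requests, duration_seconds=10):
    """Requests per second over the measurement period."""
    return total_requests / duration_seconds


# Hypothetical total for one 10-second measurement period.
example_total_requests = 101_420
example_rate = average_throughput(example_total_requests)
print(f"{example_rate:,.0f} reqs/sec")
```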

Round 1 Results

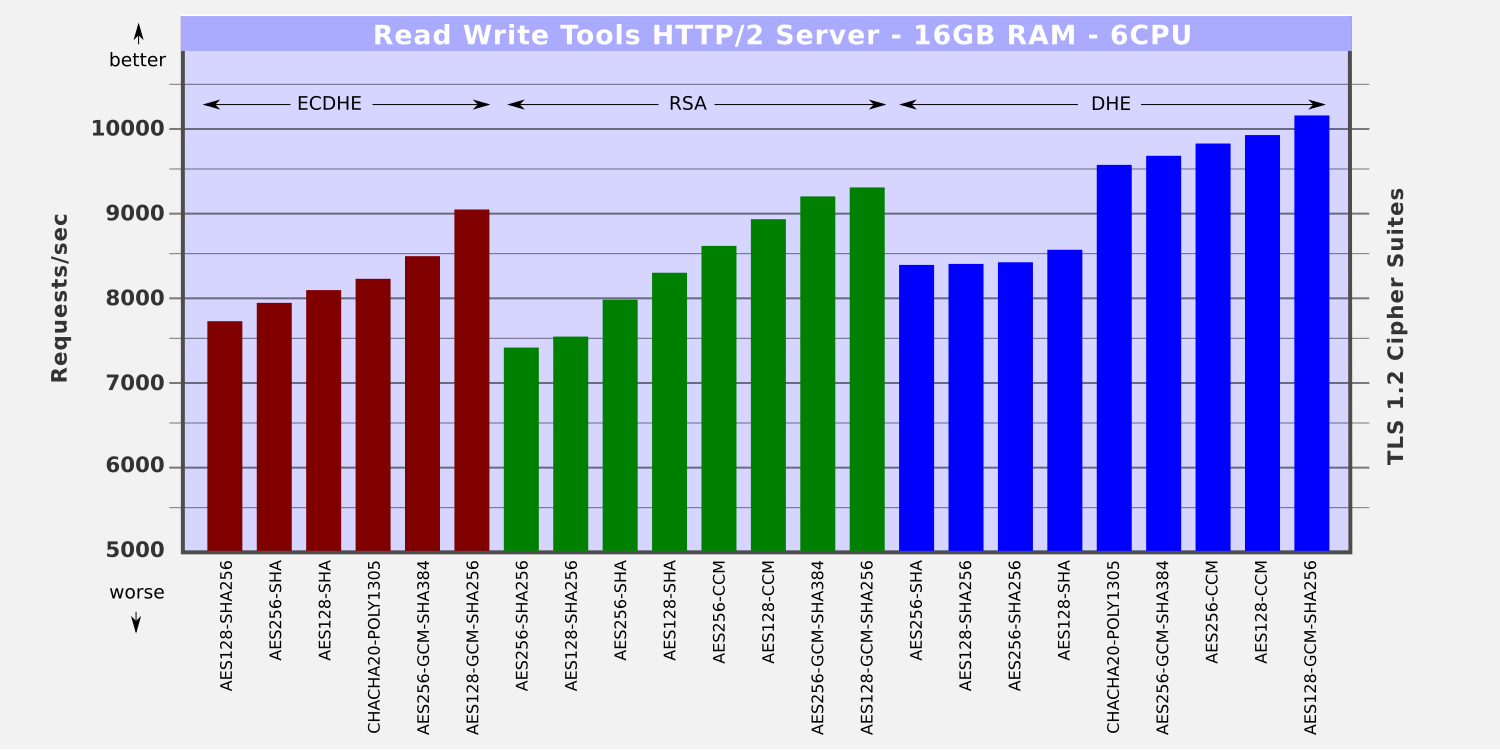

A total of 23 cipher suites were benchmarked. For convenience, they were grouped according to their key exchange algorithm:

- ECDHE: Elliptic-curve Diffie–Hellman Ephemeral (6 cipher suites)

- RSA: Rivest–Shamir–Adleman (8 cipher suites)

- DHE: Diffie–Hellman Ephemeral (9 cipher suites)

All of the cipher suites performed extremely well. The myth that HTTPS is slow was amply debunked.

The throughput ranged from 7,302 reqs/sec to 10,142 reqs/sec, a 39% increase from slowest to fastest.
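The 39% figure can be checked directly from the two throughput numbers above. This is just the arithmetic, written out:

```python
slowest = 7302   # reqs/sec, slowest cipher suite
fastest = 10142  # reqs/sec, fastest cipher suite

# Relative increase going from the slowest suite to the fastest one.
increase_percent = (fastest - slowest) / slowest * 100
print(f"{increase_percent:.1f}% increase")
```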

The best performers were the suites using AES in GCM (Galois/Counter Mode) for symmetric encryption and decryption. Second best were the ones using AES in CCM (Counter with CBC-MAC). ChaCha20-Poly1305, the cipher Google championed for TLS, placed just behind them, but well ahead of the losers. Each of these uses AEAD (authenticated encryption with associated data), which is a fancy way of saying that the integrity check is performed together with the encryption.

The remaining AES (Advanced Encryption Standard) cipher suites, the ones that run AES in CBC mode and have no GCM or CCM in their names, performed consistently worse. These use a separate HMAC integrity check in addition to the actual encryption. Sadly, the SHA256 (256-bit) variants under-performed the older and no longer recommended SHA (160-bit) variants.
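The split between the two groups can be read straight off the suite names. The sketch below is a name-based heuristic that only covers the OpenSSL-style names used in this article, not a general parser; the function name is made up for the example.

```python
AEAD_MARKERS = ("GCM", "CCM", "CHACHA20")


def integrity_style(suite_name):
    """Classify an OpenSSL-style suite name as 'AEAD' or 'HMAC'."""
    parts = suite_name.split("-")
    if any(part.startswith(AEAD_MARKERS) for part in parts):
        return "AEAD"  # integrity check is built into the cipher mode
    return "HMAC"      # separate HMAC (SHA, SHA256) alongside encryption


samples = [
    "DHE-RSA-AES128-GCM-SHA256",
    "AES256-CCM",
    "ECDHE-RSA-CHACHA20-POLY1305",
    "DHE-RSA-AES128-SHA",
    "AES256-SHA256",
]
for name in samples:
    print(f"{name:30} {integrity_style(name)}")
```

Note that in the GCM suite names the trailing SHA256 or SHA384 does not indicate a separate HMAC step, which is why the check looks for the mode marker first.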

Round 2 Results

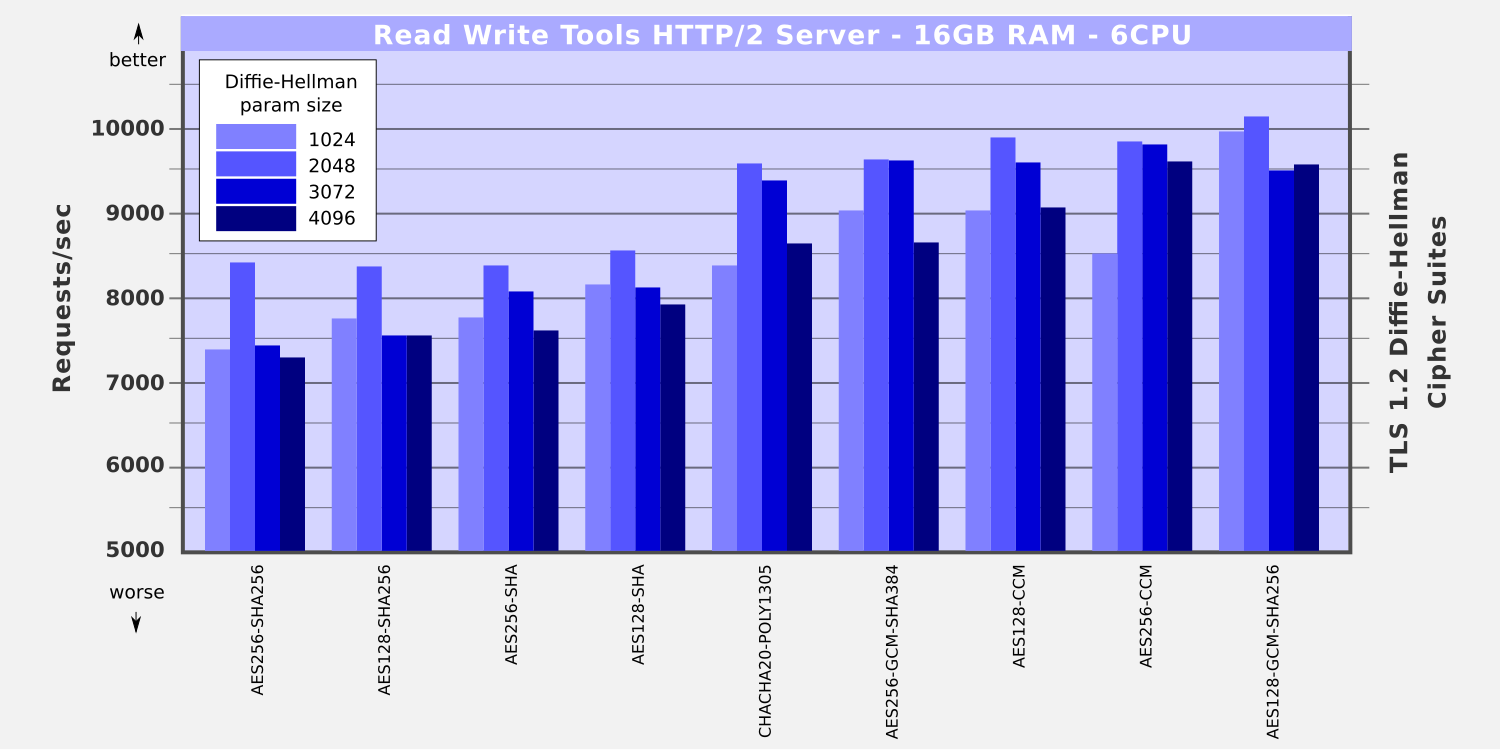

With the DHE cipher suites performing so well, Ken decided to examine the effect that dhparam size has on throughput. During key exchange, the DHE algorithm uses a prime parameter and a generator parameter, both supplied by the server. The prime can be generated at different bit sizes, and therefore different strengths. For his tests, Ken used four dhparam bit sizes: 1024, 2048, 3072, and 4096. (The older 512-bit size has been broken in practice, so it is no longer safe to use.)
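To make the role of the prime and the generator concrete, here is a toy Diffie–Hellman exchange. The numbers are deliberately tiny and insecure, and the secrets are fixed for readability. A real dhparam file holds a prime of 1024 bits or more, and real secrets are random.

```python
# Public parameters, as a server would supply them from its dhparam file.
p = 23  # toy prime (real ones are 1024 to 4096 bits)
g = 5   # generator

# Each side picks a private value (fixed here, random in practice).
server_secret = 4
client_secret = 3

# Each side publishes g^secret mod p.
server_public = pow(g, server_secret, p)
client_public = pow(g, client_secret, p)

# Each side combines its own secret with the other side's public value.
server_shared = pow(client_public, server_secret, p)
client_shared = pow(server_public, client_secret, p)

print(server_shared, client_shared)  # both sides arrive at the same value
```

Every step is a modular exponentiation, so the size of `p` determines how large the numbers in the handshake arithmetic are.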

Larger bit sizes mean the key exchange has to do its arithmetic on larger numbers, which should lengthen the initial TLS handshake. The results, however, did not bear that out. The 2048-bit size was consistently the best performer, doing significantly better than the smaller 1024-bit size. Ken could find no explanation for this.

Dear reader,

If you're friends with Alice and Bob, could you try explaining why the 2048-bit dhparam worked best?

ECDSA certificates

Ken obtained his server's certificate from LetsEncrypt, which at this time (2019) only signs RSA-type certs. The newer ECDSA certs are not yet available. Each cert type must follow a chain of authority up to a root that uses the same algorithm.

When the ECDSA certs become available through LetsEncrypt, he will need to benchmark them as well.

HTTP/2 Server Configuration

Ken configured his server to use his newfound benchmark results. He chose to go with performance over security. He figured that it's still necessary to support older TLS clients for a little while longer, so he included the 160-bit SHA HMAC suites as well. This is what his RWSERVE configuration file looked like:

    server {
        ip-address 8.16.32.64
        port 443
        cluster-size 6
        diffie-hellman `/etc/rwserve/tls/diffie-hellman-2048.pem`
        ciphers {
            DHE-RSA-AES128-GCM-SHA256
            DHE-RSA-AES128-CCM
            DHE-RSA-AES256-CCM
            DHE-RSA-AES256-GCM-SHA384
            DHE-RSA-CHACHA20-POLY1305
            AES128-GCM-SHA256
            AES256-GCM-SHA384
            ECDHE-RSA-AES128-GCM-SHA256
            AES128-CCM
            AES256-CCM
            DHE-RSA-AES128-SHA
            ECDHE-RSA-AES256-GCM-SHA384
            DHE-RSA-AES256-SHA256
            DHE-RSA-AES128-SHA256
            DHE-RSA-AES256-SHA
            AES128-SHA
            ECDHE-RSA-CHACHA20-POLY1305
            ECDHE-RSA-AES128-SHA
            AES256-SHA
            ECDHE-RSA-AES256-SHA
            ECDHE-RSA-AES128-SHA256
            AES128-SHA256
            AES256-SHA256
        }
    }
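Outside of RWSERVE, an ordered list like this is usually handed to OpenSSL-based servers as a single colon-separated string (the format that the `ciphers` option of Node.js's `tls` module accepts, for example). The helper below is a hypothetical illustration of that conversion, using only the first five suites from Ken's list.

```python
def to_openssl_cipher_string(suites):
    """Join suite names, in preference order, into an OpenSSL cipher list."""
    seen = set()
    ordered = []
    for name in suites:
        if name not in seen:  # keep the first occurrence only
            seen.add(name)
            ordered.append(name)
    return ":".join(ordered)


# First five entries of the configuration above, fastest first.
preferred = [
    "DHE-RSA-AES128-GCM-SHA256",
    "DHE-RSA-AES128-CCM",
    "DHE-RSA-AES256-CCM",
    "DHE-RSA-AES256-GCM-SHA384",
    "DHE-RSA-CHACHA20-POLY1305",
]
cipher_string = to_openssl_cipher_string(preferred)
print(cipher_string)
```

The order only matters if the server is told to prefer its own ordering over the client's. Whether RWSERVE does that by default is not stated in the article.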

Restart the server and done! A 39% throughput boost just by configuring his server.

Wow! And 10,000 requests per second really are possible!

Sweet!

Ken was once again at the top of his game. Hmm. Maybe it was time to spruce up his LinkedIn profile.

No minifig characters were harmed in the production of this Tangled Web Services episode.

Follow the adventures of Antoní, Bjørne, Clarissa, Devin, Ernesto, Ivana, Ken and the gang as Tangled Web Services boldly goes where tech has gone before.